🌟 Editor's Note: Recapping the AI landscape from 03/02/26 - 03/16/26.

🎇✅ Welcoming Thoughts

Welcome to the 1st / 34th edition of KPAI Weekly.

What’s included: company moves, a weekly winner, AI industry impacts, practical use cases, and more.

Welcome back!

Kind of a quiet news cycle across the last two weeks (comparatively).

I like this new system of reviewing older Practical Use Cases.

Uber founder Travis Kalanick is back. Double dipped on that in the Interview Highlight and Startup Spotlight.

ChatGPT and a few others were approved for use in U.S. Senate work.

Meta acquired Moltbook, the AI agent social network.

Anthropic put out a report on the effect of AI in different markets.

NVIDIA put out their ‘State of AI’ report. It tracks AI use across industries, companies, etc. Pretty interesting.

Anthropic is doubling Claude usage limits in non-peak times over the next few weeks. Nice.

Google released a tool to help predict natural disasters. Super interesting.

Let’s get started—plenty to cover this week.

👑 This Week’s Winner: NVIDIA

NVIDIA is back and stretching the lead. Between a trillion-dollar demand projection, one of the biggest compute partnerships in AI, and a telecom deal that pushes AI closer to the edge, NVIDIA had another week that reinforced just how much of the stack runs through them. Here’s the recap:

$1T Demand? Jensen Huang believes NVIDIA’s chip revenue will reach roughly $1T by 2027, arguing that inference, agents, and real-time AI services are expanding demand far beyond the original training boom. Wow.

Thinking Machines Deal: NVIDIA formed a major partnership with Thinking Machines Lab, including a significant investment and a gigawatt-scale compute agreement to support frontier model training. Thinking Machines was founded last year by former OpenAI CTO Mira Murati.

T-Mobile + Nokia Edge AI Expansion: NVIDIA, T-Mobile, and Nokia are pushing their partnership into physical AI, using 5G infrastructure as a distributed edge compute platform for real-time vision and reasoning agents. NVIDIA is in a great place for ‘Physical AI’.

NVIDIA also kicked off its annual developer conference, GTC 2026, by unveiling DLSS 5, which it described as its biggest gaming graphics breakthrough since real-time ray tracing. The company also introduced NemoClaw, an open-source stack designed to help developers run always-on autonomous agents more safely.

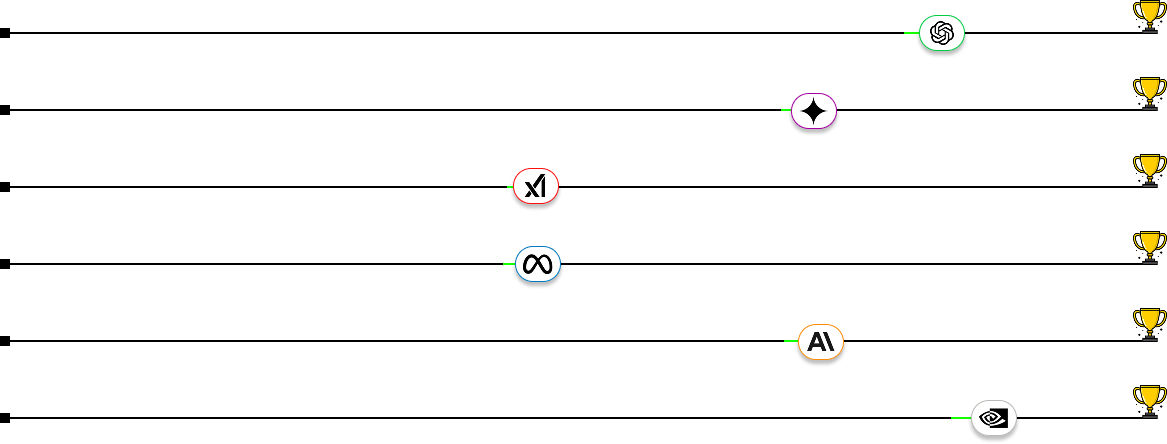

From Top to Bottom: OpenAI, Google Gemini, xAI, Meta AI, Anthropic, NVIDIA.

⬇️ The Rest of the Field

Who’s moving, who’s stalling, and who’s climbing: Ordered by production this week.

🟢 OpenAI // ChatGPT

Excel GPT + 5.4 Release: OpenAI launched GPT-5.4 for professional work and paired it with ChatGPT for Excel, reinforcing a clear push into spreadsheet-heavy, finance, and knowledge-work workflows. Nice. 5.4 is OK, but I’d like to try out ChatGPT for Excel.

Promptfoo Acquisition: OpenAI is acquiring Promptfoo to add automated red-teaming, security testing, and compliance monitoring to its agent platform, strengthening the case for safer AI deployments. Will help detect and fight some prompt injection concerns.

Coding + Enterprise Strategy Shift: OpenAI is reportedly narrowing its focus toward coding and enterprise customers, cutting side projects, and negotiating a joint venture with top private equity firms. Smart.

🟠 Anthropic // Claude

Revenue Doubling: Anthropic’s annualized revenue pace is reportedly approaching $20B, driven largely by enterprise usage. Up from $9B at the end of 2025. No surprise here.

Claude Partner Network: Anthropic committed $100M to launch a partner program aimed at scaling enterprise adoption through consultants and system integrators. Everyone is going after enterprise, but OpenAI and Anthropic are throwing everything at it right now.

Anthropic Institute: Anthropic introduced a new in-house group focused on AI safety, policy, and societal impact as its models become more powerful. Anthropic is nothing if not consistent; the move follows its core messaging.

🔵 Meta // Meta AI

New Custom Chips: Meta unveiled four new custom AI chips — MTIA 300, 400, 450, and 500 — as part of a faster release cycle meant to reduce reliance on outside GPU suppliers. I like this a lot. Following Google’s lead of creating some specialized chips internally.

News Corp Licensing Deal: Meta reportedly signed a multi-year AI content deal with News Corp worth up to $50M per year, securing higher-quality publisher material for its AI systems. This follows similar ‘news’ deals Meta has made. I wonder what their long-term play is here.

Avocado Delay + Layoff Rumors: Meta’s next major model, Avocado, was reportedly pushed to at least May 2026, while separate reports say Meta is considering cutting 20% of its staff to offset AI spend. Unfortunately layoffs are probably the right move here. On the delay, is Meta ever gonna release this new AI model?

🟣 Google // Gemini

$32B Wiz Acquisition Closes: Google finalized its largest-ever acquisition, strengthening its cloud security layer as AI drives more sensitive workloads into the cloud. Been in the works but now it’s official.

Gemini Embedding 2: Google launched its first multimodal embedding model, mapping text, images, audio, video, and more into one system, making it easier to build advanced search and retrieval across mixed data. Cool move - plays well for ‘Physical AI’.

Gemini in Workspace: Google rolled out deeper Gemini integration across Docs, Sheets, Slides, and Drive, turning Workspace into a more AI-native productivity suite. This has actually been a bit annoying. Glad it’s integrated, but you should be able to click an “I’m already using the AI tools!” button.

🔴 xAI // Grok

xAI rebuild: Musk said xAI “was not built right the first time” and is now being rebuilt from the ground up, following major cofounder exits and concerns around Grok’s coding progress. Interesting.

Finance hiring for Grok: xAI is reportedly hiring bankers, traders, and credit specialists to train Grok on real finance workflows like leveraged loans, distressed debt, and structured credit. Smart play. Specialized AI is a winner, even for larger models.

Tesla converts xAI stake: Tesla reportedly got government clearance to turn its xAI investment into a small SpaceX stake, tightening the links across Musk’s companies. Curious to see how Elon’s companies come together operationally in 2026.

📢 Impact Industries 🏥

Advertising // Agent-to-Agent Deals

Comcast's FreeWheel launched an AI agent infrastructure using the Model Context Protocol for premium streaming and CTV ad transactions. The system allows marketing operating systems to plug directly into FreeWheel’s platform. These agents autonomously negotiate, buy, and optimize video ad deals with near-zero latency. This infrastructure moves agentic AI from demonstration to productized reality, establishing a new technical standard for fully automated negotiation in premium video. It won't be all at once but we're starting to see an internet shifting towards agentic operators.


Healthcare // AI-Assisted Surgery

Perimeter Medical just won FDA approval for Claire, an AI-enabled imaging device used during live breast cancer surgery. Unlike back-office software, this tool helps surgeons inspect tissue margins in real time to ensure no cancer is left behind. By providing instant procedural decision support, Claire aims to eliminate the need for grueling follow-up operations. This regulatory milestone shows AI moving from workflow pilots into real-time clinical applications. It's one of the first cases I've seen of AI actually being used in the operating room rather than reacting to post-op scans or data. Cool!


💻 Interview Highlight: TBPN with Travis Kalanick

Interview Outline: This interview explores the "efficiency of atoms" and how AI is being used to solve real-world friction in the physical food and logistics industries. Kalanick discusses the operational challenges of CloudKitchens, focusing on predictive cooking and minimizing "dwell time" to ensure food arrives hot and fresh. He emphasizes that the biggest AI opportunity isn't in flashy chatbots but in the "un-glamorous efficiency" of massive physical industries.

About the Interviewee: Travis Kalanick is the CEO of Atoms and the co-founder of Uber. He specializes in building "real-world" technology companies that use software to optimize physical infrastructure, delivery, and logistics at a global scale.

Interesting Quote: "The edge isn't the model; it’s the data from your own operations."

My Thoughts: We’ll dive a bit more into Travis Kalanick’s new startup in the Startup Spotlight below, but I think this was an interesting look at AI in the physical world. While ‘Atoms’ doesn’t refer to molecular science, Travis breaks down how AI is used in everyday tasks such as food preparation and delivery. The quote above is relevant across industries and an important one to remember.

Condensed Interview Highlight — Travis Kalanick

Host: Why is it so much harder to apply AI to physical "atoms" than to digital "bits"?

Travis Kalanick: When you are dealing with bits, if the software fails, you just refresh the page. In the world of atoms, if the system fails, a burger is cold, a driver is lost, or a facility is backed up. You cannot "undo" a physical mistake, so the AI has to be much more integrated into the actual operational flow.

Host: How does the AI actually function inside the kitchen?

Travis Kalanick: We use it for predictive logistics. The system predicts exactly when an order should start being cooked so that it finishes at the same moment the delivery driver pulls up. The goal is a perfect handoff where the food is at its absolute peak freshness.

Host: Does AI replace the need for strong human managers in these kitchens?

Travis Kalanick: No, it actually empowers them by making "invisible" problems visible. AI gives you the data to see if a kitchen layout is inefficient or if a specific route is always bottlenecked. It highlights the friction so a human manager can step in and actually fix the process.

Host: How do you maintain a competitive edge when everyone uses the same AI models?

Travis Kalanick: The edge is not the model itself; it is the data from your own operations. If you have unique data on how millions of orders move through a physical space, you can train AI to optimize your specific workflow in a way that a generic model never could.

Host: What should founders know about building software for the physical world?

Travis Kalanick: You have to realize that you cannot just hand-wave away real-world friction. Software for physical spaces has to be built from the ground up to respect the messy reality of logistics, facilities, and human behavior.
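The predictive cooking idea from the interview comes down to timing arithmetic: start cooking at the driver's expected arrival minus the cook time, so the food spends as little time as possible waiting. Here is a minimal Python sketch of that arithmetic. The function names, the handoff buffer, and the numbers are all hypothetical illustrations, not how CloudKitchens or Atoms actually implements it.

```python
from datetime import datetime, timedelta


def cook_start_time(driver_eta: datetime,
                    cook_minutes: float,
                    handoff_buffer_minutes: float = 0.0) -> datetime:
    """Return when to start cooking so the order is ready as the driver arrives.

    handoff_buffer_minutes is a hypothetical allowance for bagging the order.
    """
    return driver_eta - timedelta(minutes=cook_minutes + handoff_buffer_minutes)


def dwell_minutes(ready_at: datetime, picked_up_at: datetime) -> float:
    """Minutes the finished food waits for pickup (0 if the driver arrived first)."""
    return max(0.0, (picked_up_at - ready_at).total_seconds() / 60)


# Made-up example: driver expected at 18:30, 12-minute cook, 1-minute bagging buffer.
eta = datetime(2026, 3, 16, 18, 30)
start = cook_start_time(eta, cook_minutes=12, handoff_buffer_minutes=1)
print(start.strftime("%H:%M"))  # 18:17
```

In practice the hard part is predicting the driver ETA and the cook time themselves, which is where operational data comes in. The subtraction is the easy bit.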

👨‍💻 Practical Use Case (Issue 02 Revisited): Deep Research

Difficulty: Basic

If you listen to or read the noahonai interview this week, you’ll get a taste of how powerful AI’s deep research functionality can be when it’s executed right.

Deep research works by pulling in hundreds to thousands of primary sources—news articles, research papers, blog posts, forum responses—and then running dozens to hundreds of follow-up queries across those sources to fill in gaps, summarize perspectives, or extend threads. It’s not just a summary of the top 10 search results. It’s full-scale, multi-threaded investigation.

What makes deep research so powerful is the concept of time. You could have the smartest, most efficient person in the world sitting next to you, and it would take them days to match what deep research can do in a few minutes. It’s an interesting framework when thinking about AI use cases: some are about productivity, some are about creativity, but almost all of them save us the one thing we can’t get back, which is time.

Sergey Brin, cofounder of Google, stepped away from his company for a few years and rejoined for the AI race in 2023. Here’s what he said about deep research:

“By default, when you use some of our AI systems... it'll suck down whatever top 10 search results... I could do that myself... But if it sucks down the top, you know, thousand results and then does follow-on searches for each of those and reads them deeply—that’s, you know, a week of work for me. Like, I can’t do that.”

Effectiveness by Company:

Grok and Perplexity are definitely the fastest. They’ll do the job in 3-4 minutes, but it often feels surface-level, or some details are askew. Gemini is very good, but sometimes it’s almost too much, and I find myself needing to sift through to make sense of it all.

GPT generally hits the sweet spot for me. It seems to pull in 90% of what Gemini does, but it presents it in a cleaner, more focused way, especially if you’re trying to build a tight argument or summary. For shorter projects, I’d recommend ChatGPT. For longer projects, I’d recommend a combination of GPT and Gemini deep research.

Issue 34 Update: A lot of people know about deep research now, but it is still one of the top AI use cases for me. What I like to do these days is run 4 DR sessions simultaneously (generally Claude, Gemini, GPT, and Grok), then combine all of that into one document. I place that document back into Claude and ask it to put together a concise new version with an emphasis on the data that was similar across the different searches. My current deep research ranking: Claude first, Gemini second, GPT third, and Grok fourth. GPT changed their output structure and I don’t like it. Still waiting on a specialized deep research API from Claude and Grok in order to fully automate this process.
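To make the "emphasis on data that was similar across searches" step concrete, here is a toy Python sketch. It assumes each report has already been boiled down to a list of short claims, and it only matches claims that are identical after lowercasing and trimming. The function name, the `min_sources` threshold, and the sample data are all made up for illustration. In the real workflow a model like Claude does this matching far more flexibly.

```python
from collections import Counter


def shared_claims(reports: dict[str, list[str]],
                  min_sources: int = 2) -> list[tuple[str, int]]:
    """Return (claim, source_count) pairs for claims found in >= min_sources reports."""
    counts: Counter[str] = Counter()
    for claims in reports.values():
        # Use a set so a claim repeated inside one report only counts once.
        counts.update({claim.strip().lower() for claim in claims})
    # Most widely shared claims first, ties broken alphabetically.
    return sorted(
        ((claim, n) for claim, n in counts.items() if n >= min_sources),
        key=lambda item: (-item[1], item[0]),
    )


# Made-up placeholder claims, one list per deep research tool.
reports = {
    "claude": ["Revenue grew 20%", "Launched in 2024"],
    "gemini": ["revenue grew 20%", "Has 500 employees"],
    "gpt": ["Revenue grew 20% ", "launched in 2024"],
    "grok": ["Has 900 employees"],
}
print(shared_claims(reports))
# [('revenue grew 20%', 3), ('launched in 2024', 2)]
```

Claims that only one tool reports (like the two conflicting employee counts here) drop out, which is the point: agreement across tools is a rough signal of what to trust first.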

🦾 Startup Spotlight

Atoms

Atoms — Gainfully Employed Robots for the Physical World.

The Problem: While AI has mastered digital tasks like writing and coding, the "physical world" remains largely un-digitized. Industries like mining, heavy transport, and large-scale food production still rely on manual labor or "dumb" machines, creating a massive bottleneck in global productivity and safety.

The Solution: Atoms builds specialized, task-oriented robots rather than general-purpose humanoids. Their strategy revolves around a "wheelbase for robots," a standardized mobility platform that can be outfitted for different industrial jobs. The goal is to create machines that are "gainfully employed," meaning they perform high-cycle, productive work in mines, kitchens, and warehouses to drive down the cost of physical goods.

The Backstory: Founded by Uber co-founder Travis Kalanick, Atoms is the rebranded evolution of his holding company, City Storage Systems. The company operated in total stealth for eight years with thousands of employees who weren't even allowed to list the company on LinkedIn. It has now officially absorbed CloudKitchens (food infrastructure) and Pronto (autonomous mining/transport) into a single robotics powerhouse with major backing from Uber and the Saudi PIF.

My Thoughts: Found this very interesting not just because of the nature of the company but also the founder backstory. On the website, Travis details how investors ripped Uber away from him at a vulnerable time in his life. Atoms fits right into the movement of AI into the physical world and I’m excited to follow the company. I think this is the way to go with robotics, and we’re already seeing it in warehouses: rather than a one-size-fits-all humanoid bot, build robots that can perform highly specialized tasks, automating them over a long period of time. I have no doubt it will end up being a success.

“It’s not likely you’ll lose a job to AI. You’re going to lose the job to somebody who uses AI”

- Jensen Huang | NVIDIA CEO

Anyone using AI for their March Madness brackets?

Till Next Time,

Noah from KPAI