🌟 Editor's Note: Recapping the AI landscape from 03/17/26 - 03/23/26.

🎇✅ Welcoming Thoughts

Welcome to the 35th edition of KPAI Weekly.

What’s included: company moves, a weekly winner, AI industry impacts, practical use cases, and more.

Another week, another Anthropic study: they surveyed 80k people on how they use AI. Pretty cool.

NVIDIA's CEO claimed we've reached AGI, but not really. See the Interview Highlight below, specifically the “My Thoughts” section.

Looks like Russia may be restricting some AI tools like Gemini and Claude.

Strong week across the board.

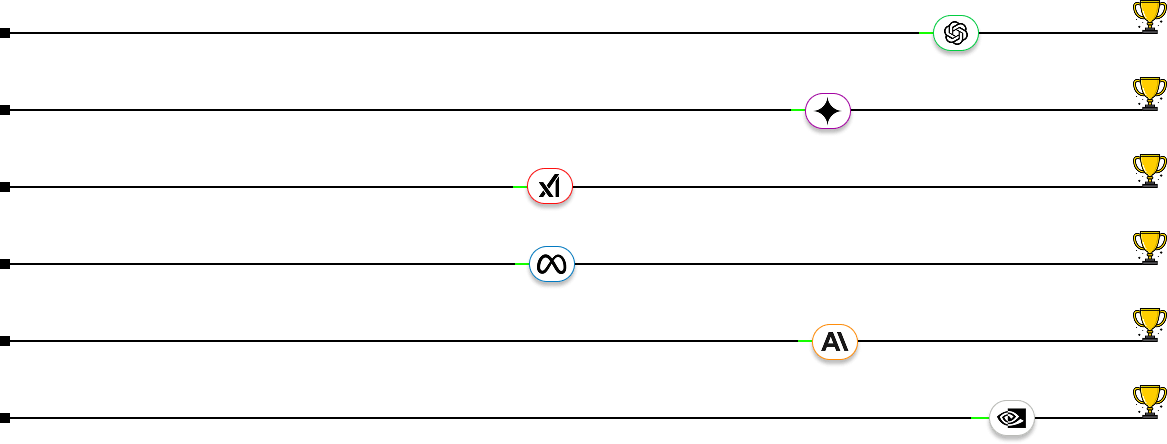

Essentially a tie across all race participants not named NVIDIA.

Changed up my process to use Claude for newsletter prep instead of GPT.

Anthropic is launching some cool stuff. I would’ve loved to have Dispatch when I was coding more (see below).

Time to start using OpenClaw now that NemoClaw (NVIDIA’s safety net) is available.

Let’s get started—plenty to cover this week.

👑 This Week’s Winner: NVIDIA

NVIDIA finished up GTC (GPU Technology Conference) with a strong showing. Between a full next-gen platform built for the agentic era, a dedicated inference chip, and a push into orbital compute, they won for a 2nd straight week. Here's the recap:

Vera Rubin Platform: NVIDIA previewed their full-stack agentic AI platform comprising seven chips, five rack-scale systems, and one integrated supercomputer. Initial systems shipping to tier-one cloud providers in H2 2026. Best of the best.

Groq 3 LPU Integration: NVIDIA introduced the Groq 3, a dedicated SRAM-packed inference chip that joins the Vera Rubin platform to handle latency-sensitive workloads that GPUs aren't optimized for.

Space Data-Center: NVIDIA previewed an orbital data center compute module delivering 25x more AI compute than previous space-rated H100 GPUs. It is already being adopted by CERN and Planet Labs. Very cool. It's still a few years or more off, but space data centers are the future.

That’s not all: NVIDIA also launched BlueField-4 STX, a new storage system built to keep up with the data demands of always-on AI agents. Uber announced it will deploy NVIDIA's self-driving software across 28 cities on four continents by 2028. NVIDIA partnered with Emerald AI to build AI data centers that can feed energy back to the power grid. And Jensen Huang previewed NVIDIA’s next-generation Feynman architecture, slated for 2028.

From Top to Bottom: OpenAI, Google Gemini, xAI, Meta AI, Anthropic, NVIDIA.

⬇️ The Rest of the Field

Who’s moving, who’s stalling, and who’s climbing: Ordered by production this week.

🔴 xAI // Grok

TeraFab Launch: Elon unveiled TeraFab, a $25B joint chip factory with Tesla and SpaceX targeting one terawatt of annual AI compute, with a prototype facility starting in Austin. This brings xAI/SpaceX closer to Tesla, which I think is a positive for the overall direction.

Grok Computer: xAI confirmed the imminent launch of "Grok Computer," a computer-controlling agent that pairs Grok's reasoning with a Tesla-developed agent for real-time screen and keyboard control. We move further into the age of Agentic AI.

Goodbye Free Tier: xAI quietly paywalled Grok Imagine's free video and image generation features, with paid SuperGrok users also reporting reduced quotas. Probably for the best; it should cut down on harmful images and lawsuits.

🟢 OpenAI // ChatGPT

Astral Acquisition: OpenAI announced it will acquire Astral, the startup behind foundational Python tools, and integrate those tools into its Codex ecosystem, which now has over 2 million weekly active users. This should help in the race against Claude Code.

New File Library: OpenAI rolled out a persistent file storage feature for ChatGPT, allowing uploads to be saved and referenced across future conversations for Plus, Pro, and Business users. Nice, helpful if you’re uploading multiple files to GPT across chats and projects.

Private Equity Offer: OpenAI is offering private equity firms a guaranteed minimum 17.5% return to attract investment into enterprise joint ventures, significantly above typical deal terms. Wow. Strong enterprise offer, especially given how much money OpenAI is losing annually.

🔵 Meta // Meta AI

CEO AI Agent: Mark Zuckerberg is reportedly building a personal AI assistant to help run Meta, designed to surface decisions and flag signals across teams without going through management layers. Pretty cool. I wonder if he’s using Meta models or outside tech. /s

AI Content Enforcement: Meta rolled out new AI-driven content moderation systems that detect roughly 2x more solicitation content while cutting error rates by over 60%. AI is great, but it creates tons of opportunity for scammers. Good move, Meta.

Manus Desktop Launch: Meta's Manus launched its desktop app for macOS and Windows, introducing "My Computer," a local AI agent that can read, edit, and organize files directly on-device. Cool, somewhat similar to Claude Cowork.

🟣 Google // Gemini

Stitch AI Update: Google launched a major update to Stitch, its AI design tool, introducing "vibe design" with voice commands, an infinite canvas, and instant prototyping. Figma shares dropped 4-8% on the news. Nice. I don’t think we’re there yet with “vibe design” and I still use Figma, but progress is progress.

More Personal Intelligence: Google expanded its Personal Intelligence feature from paid-only to all free US users, connecting Gemini to Gmail, Photos, YouTube, and Search for personalized responses. Not sure we need this yet, but now available for everyone to use.

Agentic Matching: Google integrated Gemini into the Google Marketing Platform and YouTube, allowing marketers to input a brief and have the AI autonomously match them with content creators. Very cool.

🟠 Anthropic // Claude

Music Lawsuit: BMG Rights Management sued Anthropic alleging it used copyrighted lyrics from artists including Bruno Mars, Ariana Grande, and the Rolling Stones to train Claude, citing 493 compositions. Many such cases.

Claude Dispatch: Anthropic launched Dispatch as a research preview within Cowork, enabling users to control desktop AI tasks remotely from their phone with a persistent conversation that picks up where you left off. Very cool! Somewhat similar to OpenClaw. I’ll be using it.

Pentagon Legal Escalation: Anthropic filed sworn declarations pushing back on the Pentagon's "supply chain risk" designation, with retired judges also filing a brief in the company's support ahead of a March 24 hearing. This could last a while.

🤖 Impact Industries 🎓

Robotics // MIT Wave-Former

MIT researchers unveiled Wave-Former, a system that lets robots reconstruct the 3D shape of objects hidden behind walls and fabric using radio signals paired with a generative AI model. It achieves 20% better accuracy than previous methods. A companion system called RISE reconstructs entire room layouts using a single radar - no cameras needed. Applications range from warehouse robots verifying sealed packages to smart-home robots understanding occupant positions without surveillance. Privacy-preserving perception is a big deal. One of the more novel robotics papers I've seen lately.

Education // University of Houston AI Deployment

The University of Houston announced a campus-wide AI deployment giving all students, faculty, and staff access to Gemini for Education and NotebookLM with a critical constraint: total data sovereignty. Research data and IP remain within UH's ecosystem and are never used to train public models. NotebookLM operates as a source-grounded assistant that only responds to user-provided materials with direct citations to prevent hallucinations. With 140+ faculty leading $70M+ in AI research, this offers a replicable blueprint for universities balancing adoption with IP protection.

💻 Interview Highlight: Lex Fridman with Jensen Huang

Interview Outline: The interview explores the shift from general-purpose computing to accelerated computing and the emergence of generative AI. Jensen discusses the concept of the "AI Factory," where data and energy are turned into intelligence, and explains why he believes we are entering a new industrial revolution. He also touches on NVIDIA’s unique corporate culture, the future of robotics, and why natural language is becoming the ultimate programming language.

About the Interviewee: Jensen Huang is the co-founder and CEO of NVIDIA. He is widely credited with pivoting the company from a graphics chip manufacturer to the powerhouse behind the modern AI era through the development of the GPU and CUDA platform.

Interesting Quote: "Intelligence is the new electricity. It is the fuel for the future economy."

My Thoughts: The concept of intelligence as an asset is pretty interesting to me. It makes a lot of sense when you think about it: you’re essentially contracting the world’s top researcher, scientist, or expert in any given field. It’s also worth noting that in this interview Jensen said he believes we’ve already reached AGI. He based that answer on a definition of AGI as an AI that can run a large company on its own, not the popular definition of an AI that can train itself at scale. Still a ways to go in that department.

Condensed Interview Highlight — Jensen Huang with Lex Fridman

Lex: You talk about AI as an industrial process. What does that actually mean?

Jensen Huang: In the last industrial revolution, we used water and electricity to power machines that produced physical goods. In this new revolution, we use data and energy to power "AI factories" that produce intelligence. Every company will eventually have an AI factory that takes in their raw data and outputs tokens of intelligence to run their business.

Lex: What is the next frontier for AI beyond chatbots?

Jensen Huang: The next wave is Physical AI—robotics. This requires AI that doesn't just understand language but understands the laws of physics. We use the "Omniverse" to create digital twins where robots can learn in a simulation millions of times before they ever enter the real world.

Lex: How do you manage a company as large as NVIDIA without traditional status reports?

Jensen Huang: We prioritize the speed of information and operate with a very flat structure. I don’t want a filtered version of what’s happening. We focus on first principles and shared context so that everyone knows the mission and can move as fast as possible without waiting for permission.

Lex: Will LLMs ever actually "reason" or are they just predicting the next word?

Jensen Huang: We are moving toward "System 2" thinking, where the model can pause and "think" before it responds. By scaling compute during the inference phase—giving the model more "thought cycles"—we are seeing emergent properties where the model can plan, check its work, and solve complex problems.

Lex: How do you view the battle between closed models and open-source models?

Jensen Huang: Open source is essential for the ecosystem. It allows every researcher and company to build on top of the state-of-the-art. NVIDIA supports the open-source community because when more people are building AI, the entire "Industrial Revolution" moves faster, and that benefits everyone.

👨‍💻 Practical Use Case: Claude Skills

Difficulty: Mid-level

We covered Artifacts in Issue 19 and Custom GPTs in Issue 12. Skills sit in the same family but solve a different problem: what happens when you want Claude to remember how to do something across every conversation, not just one.

A skill is a reusable instruction set you upload once. It contains detailed rules, examples, formatting guides, and optional scripts. Claude loads it automatically whenever it recognizes a relevant task.

Think of it as a permanent playbook that Claude pulls off the shelf when it sees the right job.

Here's where skills differ from what we've already covered:

Artifacts (Issue 19) live and die in a single chat. You build a dashboard or calculator, and it exists in that conversation only. Skills persist across all conversations.

Custom GPTs (Issue 12) are full chatbot personas with fixed instructions. Skills are modular: the same Claude just gets smarter about specific jobs without switching to a different bot.

Skills auto-trigger. You don't invoke them. Claude reads your message, recognizes the task, and loads the right skill behind the scenes.

Skills are useful when you have a repeatable process with specific requirements: document generation with brand templates, data analysis with company-specific metrics, client-facing reports that need to hit the same structure every time, and more.

The only problem is that the iteration loop is clunky right now. There's no in-chat skill editor: you delete the old skill, upload the new file, and open a fresh conversation to test. I'd recommend getting the output right in a normal conversation first, then locking it into a skill once you're happy with it.
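To make the "upload the new file" step less painful, here's a rough Python sketch that rebuilds a skill package each time you tweak the instructions. It assumes the layout as I understand it from Anthropic's docs: a folder containing a SKILL.md file whose frontmatter has a name and a description, zipped for upload. Double-check the current docs before relying on that. The skill name, report rules, and the build_skill_zip helper are all made up for illustration.

```python
import zipfile
from pathlib import Path

# Hypothetical skill name, used for both the folder and the zip file.
SKILL_NAME = "weekly-client-report"

# Assumed SKILL.md layout: frontmatter with name + description, then the
# instructions Claude should follow. The content here is only an example.
SKILL_MD = """\
---
name: weekly-client-report
description: Formats weekly client status reports. Use when asked to draft or update a client report.
---

# Weekly Client Report

## Structure
1. Summary (3 bullets max)
2. Metrics table
3. Risks and next steps

## Rules
- Keep the tone plain and direct.
- Always end with dated action items.
"""


def build_skill_zip(out_dir: Path, name: str = SKILL_NAME, body: str = SKILL_MD) -> Path:
    """Write <out_dir>/<name>/SKILL.md and zip the folder as <out_dir>/<name>.zip."""
    skill_dir = out_dir / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    (skill_dir / "SKILL.md").write_text(body, encoding="utf-8")

    zip_path = out_dir / f"{name}.zip"
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        # Store paths relative to out_dir so the skill folder is the zip's root entry.
        for f in sorted(skill_dir.rglob("*")):
            if f.is_file():
                zf.write(f, f.relative_to(out_dir).as_posix())
    return zip_path


if __name__ == "__main__":
    print(build_skill_zip(Path("build")))
```

Edit the SKILL_MD text, rerun the script, and upload the fresh zip. It doesn't fix the delete-and-reupload dance, but it keeps the skill in one editable place.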

Learn More Below ⬇️

🐬 Startup Spotlight

Nauticus Robotics

Nauticus Robotics — Autonomous Subsea Robots.

The Problem: Subsea work (like maintaining offshore wind farms) is slow, dangerous, and expensive. It traditionally requires massive, high-emission ships and "dumb" tethered robots that need constant human babysitting.

The Solution: Nauticus builds intelligent, untethered "Aquanaut" robots. Their AI software, ToolKITT, allows these machines to perform complex tasks solo, cutting costs by up to 90% and letting companies ditch the giant support vessels entirely.

The Backstory: Founded by Nicolaus Radford, who spent 14 years leading NASA’s robotics lab (working on Valkyrie and Robonaut). He took space-grade tech and put it underwater.

My Thoughts: Smart move. With everyone building robotics for warehouses, homes, and really everywhere on land, someone needs to build for the sea. I imagine robotics will play a huge role in cleaning up our oceans at some point in the future.

“It’s not likely you’ll lose a job to AI. You’re going to lose the job to somebody who uses AI”

- Jensen Huang | NVIDIA CEO

It’d be pretty cool to see a 4ocean X Nauticus Robotics partnership at some point.

Till Next Time,

Noah from KPAI